Meshing The Rewards - Part 2

07 Jul 2019Prologue To Meshing The Rewards - Part 2

The Concept Of Potential

Instead of giving static( or fixed) bonus at the moment the state transition has been done, we change to put a little bonus with regards to the states of the world. When you achieve a certain state of the world, you get the bonus, when you unachieve that state of the world, you lose that bonus. Everything balances out nicely.

[mjtsai think]Step further, such intuition should be aligned with Calculus fashion, when it is in some fixed point and approaching to the target, the value(bonus) should be returned in certain proportion in that fixed point, and this return value varies with respect to per changing from one distincet fixed point to its next, might be in the time unit, or quantity in some scale. Such returned value could be the accumulation in either increasing or descreasing fashion.

[Notes::1] We want to give hintsSuppose we are design a system of learning agent to make the socker robot to kick the ball into the door.

If we follow up the prior post, the existing official reward function would give us $+100$ for scoring the goal. Now, we might want to give hints to the system about:

➀how close the robot is to the ball

➁the robot is hitting the ball

➂the ball enters the goalIt depends how detail you’d like your design to simulate a physical socker game, maybe

➃how close the ball is to the goalWe need to express ➀,➁,➂,➃ in terms of the states of the world.

[Notes::2] The potentialAs to the MDP states we already know, you can regard it as the fixed state, the value function returns the value(bonus) that is binding in the state, not the state of the world mentioned above.

So, how close the robot is to the ball is the item we’d like to keep track of, we denote it as the potential.

The already know bonus(the reward) obtained after per state transition is left as it is, we’d like to illustrate the way to mesh the reward function by incorporating the factor of potential.

Change-In-State-Based Bonus

[1] Basic ideaSuppose we are designing the learning agent of socker robot, instead of just giving little bonus as a return every time the robot does a certain thing, we are going to keep track of what the state of the world is.

As we are more close to the state of the world we desire, we are going to obtain reward for it, in contrary to the case we are far away from the state of the world, we are going to lose the reward gained when we are close to this state of the world. Therefore, we should substract the reward off when we move away from those states of the world.

The point is that the change-in-state-based bonus thus obtained would be an increment or decrement in accordance to how close or how far you are subscribed by the state of the world, not the fixed constant.

If the robot is 5 pixels away from the ball to 10 pixels away from the ball, then we might obtain $-5$ of decrement, it depends on how you design, we can also define the bonus as the inverse of distance by $\frac {1}{distance}$, that is to say, if we change from 10 pixels away from the ball to 5 pixels away from the ball, we get $\frac {1}{5}-\frac {1}{10}$=$0.1$; in reverse direction, we lose bonus of $-0.1$.

Such kind of design keeps the account balance in a good shape, it gives positive return as you are approaching the state of world, as you leaves the same state of world, you just lose prior bonus by negating it, you are not just coninue to loop over and over again to get that positive return.

[2] Change-in-state-based v.s. state-based::mjtsai1974Here we are about this question how to tell the difference in between change-in-state-based and state-based bonus. If it is a state-based, it returns bonus of fixed value. To reach this state-based point, a sequence of actions might be taken, then we involve change-in-state-based concern, it could also be a state in MDP.

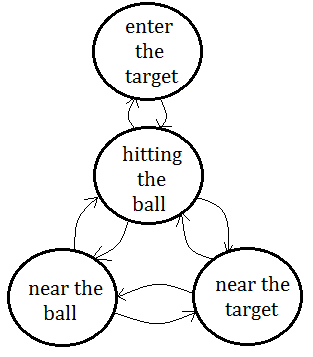

Given that we are developing a robot socker learning agent, the major purpose of the robot is to hit the ball into the target, there must exist a sequence of actions that could be break down:

➀the ball enter into the target, which is the goal of this system

➁the robot should be able to hit the ball

➂the robot should go near the ball

➃the learning agent should evaluate if the ball near the targetI think you can have even more break down items, for the simplicity in this illustration, I come out above 4 items, they are all the already known MDP states.

The point is that we can mesh the reward function by incorporating the potential(the states of world), when the robot is approaching or far away from the ball, the return bonus gets increment or decrement(by negating previous increment). When the ball is near the target, the robot is encouraged by some positive incentive, at the moment the ball is away from the target, the previous returned bonus would be negated and lost.

Above exhibition shows nothing different from already known MDP model. It depends on how you design the learning system. mjtsai think that we should also keep bonus in previous visited states, if we just lose everything learned in current state, at the moment transiting from current to next state, we could not reason for this transition, therefore we should have an appropriate value return as the reward when transiting from current to next state, or such appropriate value might be figured out in accordance to the distance you are away from the ball and that momenmt which you start to speed up to hit the ball.

Potential-Based Shapping: Illustration

[1] Something we already knowWe start from the $Q$ value function already know

[2] add the factor of potential in $R(S,A)$

$Q(S,A)$=$R(S,A)$+$\gamma\cdot\sum_{S’}P(S’\vert S,A)\cdot max_{A’}Q(S’,A’)$We take $\psi(S)$ for all $S$ to be the potential:

[3] The illustration

$R’(S,A,S’)$=$R(S,A)$-$\psi(S)$+$\gamma\cdot\psi(S’)$

, and would like to integrate into the existing Q value function

, next would be the illustration of what the resulting $Q’(S,A)$ in terms of $Q(S,A)$ supposed to be.➀$Q(S,A)$

=$R(S,A)$+$\gamma\cdot\sum_{S’}P(S’\vert S,A)\cdot max_{A’}Q(S’,A’)$

=$\sum_{S’}P(S’\vert S,A)\cdot R(S,A)$+$\gamma\cdot\sum_{S’}P(S’\vert S,A)\cdot max_{A’}Q(S’,A’)$

=$\sum_{S’}P(S’\vert S,A)\cdot (R(S,A)$+$\gamma\cdot max_{A’}Q(S’,A’))$, where we departure from $S$ to $S’$, would definitely obtain $R(S,A)$, and the summation over all weighting averaged by the transition probability would finally leads to $1$.

➁$Q’(S,A)$

=$\sum_{S’}P(S’\vert S,A)\cdot (R(S,A,S’)$+$\gamma\cdot max_{A’}Q(S’,A’))$

=$\sum_{S’}P(S’\vert S,A)\cdot (R(S,A)$-$\psi(S)$+$\gamma\cdot\psi(S’)$

$\;\;$+$\gamma\cdot max_{A’}Q’(S’,A’))$

=$\sum_{S’}P(S’\vert S,A)\cdot (R(S,A)$-$\psi(S)$+$\gamma\cdot\psi(S’)$

$\;\;$+$\gamma\cdot (R(S’,A’)$-$\psi(S’)$+$\gamma\cdot\psi(S^{''})$+$max_{A^{''}}Q’(S^{''},A^{''})))$➂the term $\gamma\cdot\psi(S')$ would be eliminated by the $\gamma\cdot\psi(S')$ contained in $Q'(S',A')$ after it has been further expanded, continue to follow this fashion, we will get all the incoming potential erased.

➃$Q’(S,A)$

[4] We still have the same optimal policy

=$\sum_{S’}P(S’\vert S,A)\cdot (R(S,A)$-$\psi(S)$+

$\;\;$+$\gamma\cdot max_{A’}Q(S’,A’))$

=$R(S,A)$-$\psi(S)$+$\gamma\cdot max_{A’}Q(S’,A’))$

=$Q(S,A)$-$\psi(S)$We do still have the same optimal policy after meshing the reward function by means of potential, since $\psi(S’)$ doesn’t depend on $A’$, the term $max_{A’}Q(S’,A’)$ would not be impacted, and we could safely cancel all the incoming $\psi(S)$ term, for all $S$. Even if we reach the convergent point, only one term $\gamma^{n}\cdot \psi(S^{n})$ would be left, and it might be ignored for $n\rightarrow\infty$.

We have shown up that meshing reward function makes no impact on original optimal policy, a lot reference claim that it might do the little help, where mjtsai think that the final term $\gamma^{n}\cdot \psi(S^{n})$ might be the key factor providing small value of very very tiny quantity.

Why such small value could do the help? The answer might be that after such a long term period convergence, equation of potential-based shapping comes out future information(or knowledge) far far away from current time tick, which could be helpful to evaluate if we should take this action!

[5] A brief reviewWe have explored 3 total different ways of messaingaround with rewards to give us new $Q$ functions that don't actually change the policy:

➀multiply by $c$, where $c>0$

➁add a constant

➂add this potential function $\psi(S)$ for all $S$

$Q$-Learning With Potentials

[1] Mesh reward function in $Q$-learningAbove section illustrates meshing the reward functionn by means of potential in the Bellman equation, in this section, we’d like to carry out this potential function idea in the context of $Q$-learning.

$Q_{t+1}(S,A)$

=$Q_{t}(S,A)$+$\alpha_{t}\cdot (R-\psi(S)+\gamma\cdot\psi(S)$

$\;\;$+$\gamma\cdot max_{A’}Q_{t}(S’,A’)-Q_{t}(S,A))$Suppose you understand this expression of Temporal Difference In Q Form that the state action pair is updated for the state action pair we just left and move a little bit in the direction of the reward plus the discounted value of the state we arrive, minus the value of the state we just left.

This time, we’d like to shift the real reward R around, mesh it with the potential function. So, we take the real reward $R$, substrate the potential we are leaving, and add the discounted potential for the state we end up in.

[2] What will happen?If we run above version of $Q$-learning, it should actually solve the original MDP, which is $(R+\gamma\cdot max_{A'}Q_{t}(S',A')-Q_{t}(S,A))$, since $\psi(S)$ doesn’t depend on action.

[3] What, if $\psi(S)$=$max_{A}Q^{\ast}(S,A)$?If $\psi(S)$=$max_{A}Q^{\ast}(S,A)$, then it already done, and it’s under the condition that we have solved the MDP problem.

Obviously, the equation becomes $Q(S,A)$

=$Q^{\ast}(S,A)$-$\psi(S)$

=$Q^{\ast}(S,A)$-$max_{A}Q^{\ast}(S,A)$We have 2 results:

[4] Potential function might be helpful

➀$Q(S,A)=0$ for the optimal action.

➁$Q(S,A)<0$ for some special usage for each action, or by the amount of local sub-optimal.Above results strike on our head that most often we initialize the $Q$ function to all zeros, because we believe zero is where everything starts, or we just know nothing about it.

Such initialization might start to head for the right direction, or it might take some non-optimal action with negative reward, after that just take another better one. This says that Potential function might be helpful in speeding up learning.

[5] $Q$-learning with or without potential function are the sameEric Wiewiora has proved potential-based shaping and Q-value initialization are equivalent.

This paper says that the series of updates that you get by running $Q$-learning with some potential function is actually the same as what you get by $Q$-learning without a potential function, on conditions that you should initialize the $Q$ function(without a potential) to what the potential function would have been, that is $Q(S,A)$+$\psi(S)$.

The $Q$ function without potential is initialized as $Q(S,A)$+$\psi(S)$ is different from the $Q$ function with potential in the learning process.

Not only does $Q(S,A)$+$\psi(S)$ converges to the same policy, but also going to have the same policy at every state transition in learning.

$\psi(S)$ is just a constant, it doesn't depend on any action, has no impact on original policy, its shift($Q(S,A)$+$\psi(S)$) has an impact on what the $Q$ values are converging toward.

[6] $Q$-learning with or without potential function are the same::proof::mjtsai1974We mesh the reward function by $R(S,A)$-$\psi(S)$+$\gamma\cdot \psi(S’)$, and the potential add-in is refered as $\gamma\cdot \psi(S’)$-$\psi(S)$.

The given $Q$ value function in TD format:

$Q_{t+1}(S,A)$

=$Q_{t}(S,A)$+$\alpha\cdot(R(S,A)+\gamma\cdot max_{A’}Q_{t}(S’,A’)-Q_{t}(S,A))$First express the $Q$-learning with potential in all state transitions:

$Q_{t+1}(S,A)$

=$Q_{t}(S,A)$+$\alpha\cdot(R(S,A)-\psi(S)+\gamma\cdot\psi(S’)$

$\;\;$+$\gamma\cdot max_{A’}Q_{t}(S’,A’)-Q_{t}(S,A))$…[A]Next to express the $Q$-learning without potential add-in and initialize the $Q$ value to the potential $\psi(S)$:

$Q_{t+1}^{'}(S,A)$

=$Q_{t}(S,A)$+$\psi(S)$+$\alpha\cdot(R(S,A)$

$\;\;$+$\gamma\cdot max_{A^{'}}Q_{t}^{'}(S^{'},A^{'})-Q_{t}^{'}(S,A))$…[B]We’d like to prove [A]=[B]

proof::mjtsai1974➀expand from [B]’s delta part

$\alpha\cdot(R(S,A)$

$\;\;$+$\gamma\cdot max_{A^{'}}Q_{t}^{'}(S^{'},A^{'})-Q_{t}^{'}(S,A))$

=$\alpha\cdot(R(S,A)$

$\;\;$+$\gamma\cdot max_{A^{'}}(Q_{t}(S^{'},A^{'})+\psi(S^{'}))-(Q_{t}(S,A)+\psi(S)))$

=$\alpha\cdot(R(S,A)$+$\gamma\cdot\psi(S^{'})$-$\psi(S)$

$\;\;$+$\gamma\cdot max_{A^{'}}Q_{t}(S^{'},A^{'})-Q_{t}(S,A))$

➁expand from [A]’s delta part

$\alpha\cdot(R(S,A)-\psi(S)+\gamma\cdot\psi(S^{'})$

$\;\;$+$\gamma\cdot max_{A^{'}}Q_{t}(S^{'},A^{'})-Q_{t}(S,A))$

=$\alpha\cdot(R(S,A)+\gamma\cdot\psi(S^{'})-\psi(S)$

$\;\;$+$\gamma\cdot max_{A^{'}}Q_{t}(S^{'},A^{'})-Q_{t}(S,A))$We found [A] and [B] they two are the same in the delta part.

➂now only the $Q_{t}(S,A)$ in [A] versus $Q_{t}(S,A)$+$\psi(S)$ in [B], where the $\psi(S)$ is the shift added to all the states $S$ in each transition, and it depends on no action, thus no impact on the original optimal policy.We finally prove that under a broad category of policies, the behavior of these two learners [A] and [B] are indistinguishable.

Potentials Are Just Like Initialization

[1] The interpretation of potentialWe can treat the potential to be $Q$ values initialization.

[2] Random initialization is debatableWe can make the achievement of potential function by means of $Q$ values initialization, we already encountered, guess what?

➀initialize with all zeros.

➁random initialization.When you make initialization with all zeros, this might due to the fact that you don’t know anything about it. All zeros initialization might be useful for the scenario that everything begins from zero, but this might not be the true thing in real world.

As to random initialization, you are asserting that these particular(random) values could not be the correct departuring point, you expect these random values to be the right configuration. Then, why not just beginning with the correct initializaton?

[3] Why not initializedd with the correct value?One reason for random values might be due to that you don’t know, if you always give it all zeros, or some high values versus low values, you are just biasing it.

You shouldn't be randomly initializing $Q$ functions, basically, you are injecting knowledge!!! But, not noise!!!

Knowledge and noise don’t both start within, only noise does. We could regard noise as the beginning of knowledge.

The punchline is that you shouldn't randomly initialize your $Q$ functions, you can actually inject various kinds of knowledge by $Q$ learning with $\psi(S)$ to be all state $S$'s initialization value.

Cautions must be made that potential function might hurts.

Addendum

➀Meshing with rewards, Charles IsBell, Michael Littman, Reinforcement Learning By Georgia Tech(CS8803)

➁Potential-Based Shaping and Q-Value Initialization are Equivalent, Eric Wiewiora, Department of Computer Science and Engineering University of California, San Diego